Preface

After a long time, I have decided to resurrect the mathematics part of this blog! I started Diversions in Mathematics as a way for me to try to explain mathematics to the general public. This continues to be the main goal of this series of blogposts — for a more detailed introduction, please read the introductory remarks. I wrote two posts in this series, but then abruptly stopped. Part of the reason was that my studies got in the way, but I was also unsure exactly what material to present, and how to present it. I wrote down some of my thoughts on this matter in a previous update post.

One of the main issues as a writer is to consider the readers’ background in mathematics. For posts targeting the general public (like the previous two Diversions), I have tried to assume as little as possible while maintaining the discussion at an intelligent level (i.e. without “dumbing down” anything). However, this already assumes familiarity with many mathematical concepts taught in high school, or at least, some level of maturity in regard to abstract reasoning. Consequently, I have decided to relaunch Diversions in Mathematics with high school mathematics as a foundation.

In this blogpost, I will introduce the Cauchy-Schwarz inequality, one of the most fundamental results in mathematical analysis, with the aim of connecting various topics that are typically studied in the Year 12 HSC maths curriculum in NSW. The main article is below.

Equations vs. Inequalities

Much of the high school maths curriculum, for better or worse, is devoted to methods of solving equations. Do the following exercises ring a bell?

Homework Exercise (due on Monday): For each of the following equations, find all solutions in the real numbers.

I hope this doesn’t induce traumatic memories. As an unfortunate consequence, I suspect that many people have the impression that maths is just about solving equations. This is far from the truth! I am rather fond of this cartoon from Ben Orlin:

The cartoon comes from this article, which I recommend reading.

Of course, learning to solve equations is extremely important and useful. However, the key point I want to raise is that tackling inequalities often requires a set of tools that is quite different from those used to solve equations. A solution to an algebraic equation, as in my hypothetical homework exercise above, is simply a number. This is a quantitative statement: we are affirming that when the variable is equal to a certain number, it satisfies the desired formula. On the other hand, the study of inequalities gives the opportunity to consider qualitative statements. For example, if $f$ is a given function, then the statement

$$|f(x)| \le 1 \quad \text{for all } x \in \mathbb{R}$$

says that the absolute value of the output is bounded above by 1 for every real number input $x$. An equivalent statement is given by

$$-1 \le f(x) \le 1 \quad \text{for all } x \in \mathbb{R}.$$

It is conceivable that we don’t know the value of $f(x)$ for some input, or even for many inputs $x$. For example, $f$ could be the solution to a differential equation that is extremely difficult or impossible to solve by hand, and hence we can only estimate the values. Depending on the situation, we may not even care to know the precise values of the function. We could fill many blogposts with discussions on the utility of qualitative information in many aspects of science and humanities. Instead, I want to focus on just one famous inequality in this blogpost. Before we see its proper form, let’s discuss positivity.

Positivity

Given any expression involving an inequality sign $\le$, we can easily transform it into a statement about positivity: the fundamental principle is that if $a$ and $b$ are quantities such that $a \le b$, then this is equivalent to the statement that $b - a \ge 0$, i.e. $b - a$ is positive. [Before we continue, a quick note on terminology. Our convention differs slightly from other texts, where positive means $x > 0$. However, I prefer to avoid double negatives such as “non-negative”. Hence, if $x > 0$, we will say that “$x$ is strictly positive” to emphasise the difference from $x \ge 0$.]

The basis of many inequalities is the following fact:

$$x^2 \ge 0 \quad \text{(for all real numbers } x\text{)}$$

Since this is true for all real numbers, we can substitute any other expression for a real number in place of $x$ to obtain many other inequalities. This is a common algebraic trick that ought to be familiar to many students.

As a simple but important example, consider substituting $a - b$ in place of $x$. Then we can expand out the brackets to obtain $a^2 - 2ab + b^2 \ge 0$, which implies the following classic inequality:

$$ab \le \frac{a^2 + b^2}{2} \tag{$\ast$}$$

If $a, b$ are positive numbers, we can replace $a, b$ by their square roots $\sqrt{a}, \sqrt{b}$ in the above inequality, rearrange a little, and obtain

$$\sqrt{ab} \le \frac{a + b}{2}$$

This is a special case of another famous result, namely the arithmetic-geometric mean inequality (often abbreviated to AGM inequality). Now we are ready to introduce the Cauchy-Schwarz inequality in its simplest form.

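Let $n \ge 1$ be an integer, and let $x_1, \dots, x_n$ and $y_1, \dots, y_n$ be real numbers. Then

$$\sum_{i=1}^{n} x_i y_i \le \sqrt{\sum_{i=1}^{n} x_i^2}\,\sqrt{\sum_{i=1}^{n} y_i^2}.$$

This is definitely an unwieldy statement, but we will see a dramatic improvement towards the end of this blogpost! We will prove this result by induction (another important HSC topic). However, it is worth examining some simple cases first.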
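Before proving anything, it can be reassuring to test the statement numerically. The following is a minimal sketch rather than a proof: the helper name `cauchy_schwarz_sides` and the small rounding tolerance are my own illustrative choices, and the random inputs are arbitrary.

```python
import math
import random


def cauchy_schwarz_sides(xs, ys):
    """Return (left, right): the two sides of the Cauchy-Schwarz inequality.

    left  = sum of the products x_i * y_i
    right = sqrt(sum of x_i^2) * sqrt(sum of y_i^2)
    """
    if len(xs) != len(ys):
        raise ValueError("xs and ys must have the same length")
    left = sum(x * y for x, y in zip(xs, ys))
    right = math.sqrt(sum(x * x for x in xs)) * math.sqrt(sum(y * y for y in ys))
    return left, right


# Try a few random lists of real numbers for n = 1, 2, ..., 5.
random.seed(0)
for n in range(1, 6):
    xs = [random.uniform(-10, 10) for _ in range(n)]
    ys = [random.uniform(-10, 10) for _ in range(n)]
    left, right = cauchy_schwarz_sides(xs, ys)
    # The tolerance only guards against floating-point rounding.
    assert left <= right + 1e-9
```

Passing checks like these are evidence, not a proof; the argument that follows is what establishes the inequality for all real inputs.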

In the case where $n = 1$, there is almost nothing to prove. The summations reduce to single terms: we have $x_1 y_1$ on the left hand side and $\sqrt{x_1^2}\,\sqrt{y_1^2}$ on the right. However, recall that if $x$ is any real number, then

$$\sqrt{x^2} = |x|.$$

So the right hand side of the Cauchy-Schwarz inequality is equal to $|x_1|\,|y_1| = |x_1 y_1|$, and we can clearly see that this is larger than or equal to $x_1 y_1$.

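For $n = 2$, the Cauchy-Schwarz inequality reads

$$x_1 y_1 + x_2 y_2 \le \sqrt{x_1^2 + x_2^2}\,\sqrt{y_1^2 + y_2^2}.$$

Suddenly, things are not so obvious! How could we demonstrate the truth of this inequality? The presence of squares and the way that the terms are paired up on the left hand side suggests that we use our starred inequality from earlier, applied to each product $x_i y_i$. Then we obtain

$$x_1 y_1 + x_2 y_2 \le \frac{x_1^2 + y_1^2}{2} + \frac{x_2^2 + y_2^2}{2} = \frac{x_1^2 + x_2^2 + y_1^2 + y_2^2}{2}.$$

Unfortunately, this is not quite right: the right hand side is a bit too large. Indeed, upon a moment’s reflection, perhaps you spot the connection to the AGM inequality $\sqrt{ab} \le \frac{a + b}{2}$. By setting $a = x_1^2 + x_2^2$ and $b = y_1^2 + y_2^2$, we deduce

$$\sqrt{x_1^2 + x_2^2}\,\sqrt{y_1^2 + y_2^2} \le \frac{x_1^2 + x_2^2 + y_1^2 + y_2^2}{2}.$$

Clearly we need to look elsewhere… but we need not go too far. Recall that the simple AGM inequality above was a consequence of $x^2 \ge 0$ followed by various clever substitutions. Could we not deduce the $n = 2$ case of the Cauchy-Schwarz inequality by considering the positivity of a certain quantity?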
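To see concretely why the first attempt falls short, here is a small sketch comparing the three quantities for one choice of numbers. The function name `two_term_bounds` and the sample values are purely illustrative assumptions.

```python
import math


def two_term_bounds(x1, x2, y1, y2):
    """Return (lhs, cs_bound, naive_bound) for the n = 2 case.

    lhs         = x1*y1 + x2*y2
    cs_bound    = sqrt(x1^2 + x2^2) * sqrt(y1^2 + y2^2)   (Cauchy-Schwarz right hand side)
    naive_bound = (x1^2 + x2^2 + y1^2 + y2^2) / 2         (from the starred inequality)
    """
    lhs = x1 * y1 + x2 * y2
    cs_bound = math.sqrt(x1**2 + x2**2) * math.sqrt(y1**2 + y2**2)
    naive_bound = (x1**2 + x2**2 + y1**2 + y2**2) / 2
    return lhs, cs_bound, naive_bound


lhs, cs_bound, naive_bound = two_term_bounds(1.0, 2.0, 3.0, 1.0)
# lhs is 5.0 and naive_bound is 7.5; cs_bound = sqrt(50) sits strictly between them.
assert lhs <= cs_bound <= naive_bound
```

For these numbers the naive bound is valid but weaker than the Cauchy-Schwarz bound, which is exactly the gap the AGM inequality predicts.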

As an initial investigation, let us square both sides of the Cauchy-Schwarz inequality and expand out the brackets:

$$(x_1 y_1 + x_2 y_2)^2 \le (x_1^2 + x_2^2)(y_1^2 + y_2^2) \tag{1a}$$

$$x_1^2 y_1^2 + 2 x_1 y_1 x_2 y_2 + x_2^2 y_2^2 \le x_1^2 y_1^2 + x_1^2 y_2^2 + x_2^2 y_1^2 + x_2^2 y_2^2 \tag{1b}$$

At first glance, this appears to have made things worse, but fortunately, many terms cancel out. We find that the above inequality is equivalent to

$$2 x_1 y_1 x_2 y_2 \le x_1^2 y_2^2 + x_2^2 y_1^2 \tag{2a}$$

At this point, it is hopefully clear that lurking in the background is the positive quantity

$$(x_1 y_2 - x_2 y_1)^2 \ge 0 \tag{2b}$$

We can now prove the Cauchy-Schwarz inequality in the case where $n = 2$. Expanding the square in (2b) and rearranging immediately gives (2a). Adding $x_1^2 y_1^2 + x_2^2 y_2^2$ to both sides of (2a) yields (1b), which can be factorised to produce (1a). Finally, since both sides of (1a) are positive, we may take the square root of both sides, and using the fact that any number is less than or equal to its absolute value, we conclude

$$x_1 y_1 + x_2 y_2 \le |x_1 y_1 + x_2 y_2| \le \sqrt{x_1^2 + x_2^2}\,\sqrt{y_1^2 + y_2^2},$$

which is the desired result. Observe that we have discovered the steps in the backwards order! This can happen when proving inequalities: very often, a lot of investigation “around” the problem is required before the solution strategy becomes clear.
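The cancellation that drives this argument can also be checked mechanically. The sketch below verifies, using exact integer arithmetic over a small grid, that the difference between the two sides of the squared inequality equals the square $(x_1 y_2 - x_2 y_1)^2$. The function names `gap` and `cross_square` are hypothetical labels chosen for this illustration.

```python
from itertools import product


def gap(x1, x2, y1, y2):
    """Right side minus left side of the squared n = 2 inequality."""
    return (x1 * x1 + x2 * x2) * (y1 * y1 + y2 * y2) - (x1 * y1 + x2 * y2) ** 2


def cross_square(x1, x2, y1, y2):
    """The positive quantity lurking in the background."""
    return (x1 * y2 - x2 * y1) ** 2


# Integers keep the arithmetic exact, so equality can be tested directly.
for x1, x2, y1, y2 in product(range(-3, 4), repeat=4):
    assert gap(x1, x2, y1, y2) == cross_square(x1, x2, y1, y2)
```

Since the gap is a perfect square, it can never be negative, which is the whole content of the $n = 2$ case.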

Inductive proof of Cauchy-Schwarz

It is about time we tackle the general case. We will proceed by mathematical induction, which is a staple of the HSC examinations (and does not involve electromagnetism). As it turns out, the discussion above has given us all the tools needed to complete the proof!

Base case: $n = 1$

In this case, the Cauchy-Schwarz inequality reduces to a statement about real numbers and their absolute values:

$$x_1 y_1 \le |x_1|\,|y_1|,$$

and we have verified the truth of this statement in the previous section.

Induction step

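Now assume that the Cauchy-Schwarz inequality holds for some integer $n \ge 1$. We will show that it holds for $n + 1$; that is, we will prove

$$\sum_{i=1}^{n+1} x_i y_i \le \sqrt{\sum_{i=1}^{n+1} x_i^2}\,\sqrt{\sum_{i=1}^{n+1} y_i^2}.$$

Since we have assumed the result to be true for $n$ terms, it follows that

$$\sum_{i=1}^{n+1} x_i y_i = \sum_{i=1}^{n} x_i y_i + x_{n+1} y_{n+1} \le \sqrt{\sum_{i=1}^{n} x_i^2}\,\sqrt{\sum_{i=1}^{n} y_i^2} + x_{n+1} y_{n+1}.$$

At this point, you should be wondering, how can we get the $x_{n+1}$ and $y_{n+1}$ to appear inside the square roots? This seems like a very messy operation. However, upon further reflection, you would probably stumble across the idea of using the $n = 2$ case of the Cauchy-Schwarz inequality, which we already proved in the previous section! Notice that the right hand side of the result directly above is of the form $AB + x_{n+1} y_{n+1}$, where

$$A = \sqrt{\sum_{i=1}^{n} x_i^2} \quad \text{and} \quad B = \sqrt{\sum_{i=1}^{n} y_i^2}$$

are the somewhat unwieldy square roots of sums. We know already that

$$AB + x_{n+1} y_{n+1} \le \sqrt{A^2 + x_{n+1}^2}\,\sqrt{B^2 + y_{n+1}^2},$$

and consequently

$$\sum_{i=1}^{n+1} x_i y_i \le \sqrt{\sum_{i=1}^{n} x_i^2 + x_{n+1}^2}\,\sqrt{\sum_{i=1}^{n} y_i^2 + y_{n+1}^2} = \sqrt{\sum_{i=1}^{n+1} x_i^2}\,\sqrt{\sum_{i=1}^{n+1} y_i^2}.$$

This completes the inductive step, and hence proves the Cauchy-Schwarz inequality.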
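The two-stage chain used in the inductive step can be traced numerically. The following sketch is only an illustration under my own naming (`check_induction_chain`, `A`, `B`): for each $n$, it checks that the sum of the first $n + 1$ products is at most $AB + x_{n+1} y_{n+1}$, which in turn is at most the product of the two enlarged square roots.

```python
import math


def check_induction_chain(xs, ys, tol=1e-9):
    """Check both inequalities of the inductive step for every n < len(xs).

    A and B are the square roots of the sums of squares of the first n terms.
    Returns True if, for each n,
        sum_{i<=n+1} x_i*y_i  <=  A*B + x_{n+1}*y_{n+1}
                              <=  sqrt(A^2 + x_{n+1}^2) * sqrt(B^2 + y_{n+1}^2).
    """
    for n in range(1, len(xs)):
        A = math.sqrt(sum(x * x for x in xs[:n]))
        B = math.sqrt(sum(y * y for y in ys[:n]))
        x_next, y_next = xs[n], ys[n]  # these play the role of x_{n+1}, y_{n+1}
        partial = sum(x * y for x, y in zip(xs[: n + 1], ys[: n + 1]))
        middle = A * B + x_next * y_next
        final = math.sqrt(A * A + x_next**2) * math.sqrt(B * B + y_next**2)
        # tol only absorbs floating-point rounding.
        if not (partial <= middle + tol and middle <= final + tol):
            return False
    return True


assert check_induction_chain([1, -2, 3, 0.5], [4, 5, -6, 2])
```

The first comparison is the induction hypothesis at work, and the second is the $n = 2$ case applied to the pairs $(A, x_{n+1})$ and $(B, y_{n+1})$.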

An invitation to a geometric proof

The proof of the Cauchy-Schwarz inequality we have just developed is purely algebraic: as a setup, we used nothing more than a few elementary inequalities, and the conclusion was achieved using the power of inductive reasoning. The x’s and y’s appearing in the inequality can be taken as arbitrary real numbers. However, as I remarked above already, the square root of the sum of squares that appears on the right hand side of the Cauchy-Schwarz inequality is a rather unwieldy algebraic expression. Surely, you suspect, there must be something deeper. That expression must have a neater interpretation?

If you suspected this, then you would be absolutely right: the Cauchy-Schwarz inequality is most useful in the context of vectors.

Remark 3.2. The study of vectors has been introduced to the Extension 2 mathematics syllabus in NSW only this year (2020), although it has been in the mathematics syllabus in the Victoria and South Australia examinations for some time already.

Vectors are the foundational tool for mathematics in higher dimensions, and vector spaces are the natural setting for many problems throughout mathematics and hence in all areas of science and engineering. Although the concept of a vector space in general is quite abstract, there is a particular example that is familiar to everyone: the 3-dimensional space we live in. Every point in 3-dimensional space can be described by a set of 3 real numbers, its coordinates, denoted by $(x_1, x_2, x_3)$, where $(0, 0, 0)$ is an arbitrarily chosen reference point called the origin. In a similar way, mathematicians have no difficulty thinking about points in $n$-dimensional space, which are of course described by a set of $n$ coordinates, $(x_1, x_2, \dots, x_n)$.

In this setting, a vector can be represented as an arrow connecting two points in the space. A vector that is drawn from the origin is called a position vector, since it specifies uniquely the position of some point, say . Then we can label the vector with the same coordinates as we used for the point

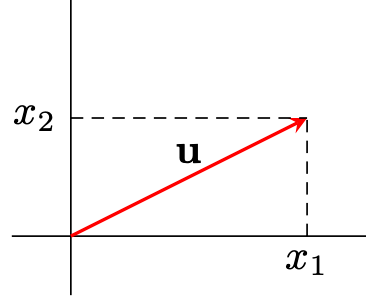

. In the picture below, we have a position vector

in 2D space (the plane) corresponding to the point

with coordinates

. The vector is often written with square brackets

to distinguish it from the point

(although this is not universally enforced).

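One of the fundamental properties of a vector is its length, or magnitude or norm (the last term is used in more abstract situations). We denote the length of a vector $\mathbf{v}$ using the symbol $\|\mathbf{v}\|$. For position vectors in the plane, it is easy to see that the length can be calculated using Pythagoras’ theorem. If $\mathbf{v} = [x_1, x_2]$, then

$$\|\mathbf{v}\| = \sqrt{x_1^2 + x_2^2}.$$

The generalisation to an arbitrary number of dimensions is quite natural:

$$\|\mathbf{v}\| = \sqrt{x_1^2 + x_2^2 + \dots + x_n^2} = \sqrt{\sum_{i=1}^{n} x_i^2}.$$

Thus, given two vectors $\mathbf{x} = [x_1, \dots, x_n]$ and $\mathbf{y} = [y_1, \dots, y_n]$, the right hand side of the Cauchy-Schwarz inequality is simply $\|\mathbf{x}\|\,\|\mathbf{y}\|$, the product of the lengths of $\mathbf{x}$ and $\mathbf{y}$.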
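As a quick illustration of the length formula, here is a minimal sketch that represents a vector as a plain Python list of coordinates. The function name `norm` and the sample vectors are my own choices for the example.

```python
import math


def norm(v):
    """Length of a vector given as a list of coordinates (Pythagoras in n dimensions)."""
    return math.sqrt(sum(c * c for c in v))


# The familiar 3-4-5 right triangle in the plane.
assert norm([3, 4]) == 5.0

# Two vectors in 3-dimensional space with lengths 3 and 7.
x = [1, 2, 2]
y = [2, 3, 6]
right_hand_side = norm(x) * norm(y)  # product of the lengths
assert right_hand_side == 21.0
```

With the lengths packaged this way, the right hand side of the Cauchy-Schwarz inequality becomes a single product rather than two square roots of sums.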

Naturally, you may wonder if we can interpret the left hand side in terms of vectors. Indeed, it does appear that there is some kind of “product” operation going on: notice that the left hand side is obtained by taking the product of each pair of coordinates $x_i y_i$, and then summing up everything. As we will see in the next blogpost, such an operation is called a dot product, which is a special case of the very general concept of an inner product on a vector space. The Cauchy-Schwarz inequality demonstrates its full power when generalised to a statement about inner products. In this regard, the inequality has a nice geometric interpretation, and moreover can be derived as a consequence of an optimisation problem. We will discuss these things in the next blogpost!

For the curious reader, I have written a set of notes on the essential vector concepts taught in the HSC syllabus.
