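In the previous post, we introduced the Cauchy-Schwarz inequality purely as a statement about real numbers: for all real numbers $a_1, \dots, a_n$ and $b_1, \dots, b_n$,

$$\left|\sum_{i=1}^n a_i b_i\right| \le \sqrt{\sum_{i=1}^n a_i^2}\,\sqrt{\sum_{i=1}^n b_i^2}.$$
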
We proved this inequality using mathematical induction. However, one could argue that the method did not yield much insight. As our proof was entirely algebraic, it is not easy to see why the sums that appear in the inequality are reasonable quantities to consider, and there is no hint as to how they could arise naturally in mathematical problems. Therefore, in this post, we will look at the Cauchy-Schwarz inequality from a geometric perspective, which reveals a much richer structure. Moreover, we will see how the inequality arises naturally from a basic optimisation problem.

Note: I continue with the convention from the previous post that a quantity $x$ is called positive if $x \ge 0$. We say that $x$ is strictly positive if $x > 0$.

The dot product

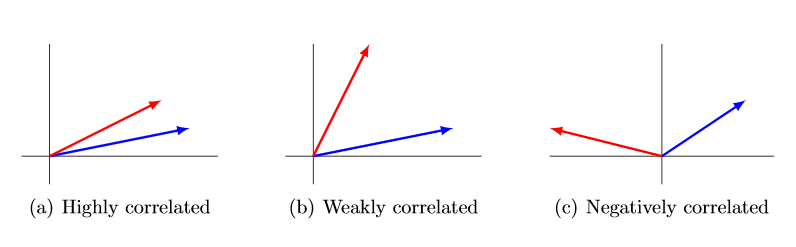

Mathematics is all about discovering patterns and relationships between objects (they can be abstract mathematical objects or indeed real-life objects!). Given two vectors, it is quite natural to ask: how can we describe the relationship between them? Can we quantify what it means for a pair of vectors to be “close” to each other? Since we often think of vectors as arrows in space that encode a direction, it makes sense intuitively to consider “closeness” of two vectors as a measure of “alignment” between their respective directions. Here are some pictures to illustrate what I mean:

We are therefore looking for an operation that takes two vectors as inputs and outputs a measure of their correlation. If the vectors have unit length, then this operation should return something directly connected to the angle between them. It turns out that the most sensible definition for such an operation is the dot product, defined by

$$\mathbf{a} \cdot \mathbf{b} = |\mathbf{a}|\,|\mathbf{b}| \cos\theta,$$

where $\theta$ is the angle between the two vectors. You may wish to consult Section 3 in my notes on the dot product for a detailed discussion. Notice the dot between the two vectors $\mathbf{a}$ and $\mathbf{b}$: we do not write $\mathbf{a}\mathbf{b}$ (so it is not a “multiplication”). More importantly, observe that the dot product of two vectors is a scalar quantity (a real number, not another vector!). This makes sense: our aim was to measure correlation between vectors, and a vector-valued answer would not be a reasonable solution for this purpose.

If we are given the vectors in terms of their coordinates, say $\mathbf{a} = (a_1, \dots, a_n)$ and $\mathbf{b} = (b_1, \dots, b_n)$, then one can derive the formula

$$\mathbf{a} \cdot \mathbf{b} = a_1 b_1 + a_2 b_2 + \cdots + a_n b_n = \sum_{i=1}^n a_i b_i$$

with a bit of trigonometry. To see the details, I refer you again to my notes, where the calculation is done for 3-dimensional vectors, since the notes were designed for HSC students (although the method I used works in any number of dimensions). Thus the dot product is exactly the sum appearing on the left hand side of the Cauchy-Schwarz inequality! It was already mentioned in the previous post that the right hand side is equal to $|\mathbf{a}|\,|\mathbf{b}|$, the product of the lengths of the vectors. Consequently we can restate the Cauchy-Schwarz inequality in an elegant, compact manner:

Let $\mathbf{a}, \mathbf{b} \in \mathbb{R}^n$. Then $|\mathbf{a} \cdot \mathbf{b}| \le |\mathbf{a}|\,|\mathbf{b}|$.
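
To make the coordinate formula concrete, here is a small Python sketch (my own illustration, not taken from the notes) that computes the dot product and the length of a vector from coordinates, recovers the angle from $\mathbf{a}\cdot\mathbf{b} = |\mathbf{a}|\,|\mathbf{b}|\cos\theta$, and spot-checks the compact form of Cauchy-Schwarz on random vectors. The helper names `dot`, `norm` and `angle` are hypothetical choices made for this example.

```python
import math
import random

def dot(a, b):
    """Coordinate formula for the dot product: a_1*b_1 + ... + a_n*b_n."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    """Length of a vector, using |a|^2 = a . a."""
    return math.sqrt(dot(a, a))

def angle(a, b):
    """Angle (in radians) between non-zero vectors, from a . b = |a||b| cos(theta)."""
    c = dot(a, b) / (norm(a) * norm(b))
    # Clamp to [-1, 1] so floating-point round-off cannot push acos out of its domain.
    return math.acos(max(-1.0, min(1.0, c)))

# Spot-check |a . b| <= |a||b| on random vectors, allowing a tiny round-off tolerance.
random.seed(0)
for _ in range(1000):
    n = random.randint(1, 6)
    a = [random.uniform(-10, 10) for _ in range(n)]
    b = [random.uniform(-10, 10) for _ in range(n)]
    assert abs(dot(a, b)) <= norm(a) * norm(b) + 1e-9

print(angle([1, 0], [0, 2]))  # 1.5707963267948966, i.e. pi/2
```

A random spot-check like this is no substitute for a proof, of course; it simply makes the statement tangible.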

Schwarz’s proof

I believe the following proof is due to Hermann Schwarz himself (although I haven’t actually researched this, so I’ll flag this line with [citation needed]). The proof is so slick it almost feels like cheating!

Proof.

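Define the function

$$f(t) = |\mathbf{a} + t\mathbf{b}|^2, \qquad t \in \mathbb{R}.$$

Using the fact that $|\mathbf{v}|^2 = \mathbf{v} \cdot \mathbf{v}$ for all vectors $\mathbf{v}$, we can expand the definition to discover that $f$ is actually a quadratic function:

$$f(t) = |\mathbf{b}|^2 t^2 + 2(\mathbf{a} \cdot \mathbf{b})\,t + |\mathbf{a}|^2.$$

(You are encouraged to fill in the details.) We may assume $\mathbf{b} \neq \mathbf{0}$, since otherwise both sides of the inequality are zero and there is nothing to prove; this guarantees that the leading coefficient $|\mathbf{b}|^2$ is non-zero. Now, the squared length of any vector is always positive, so that $f(t) \ge 0$ for all $t \in \mathbb{R}$. It follows that the quadratic $f$ can have at most one real root. Recalling what we learned in high school about quadratic equations, this means that the discriminant of $f$ must be negative (that is, $\le 0$). Precisely, we have the following necessary condition:

$$\Delta = 4(\mathbf{a} \cdot \mathbf{b})^2 - 4|\mathbf{a}|^2 |\mathbf{b}|^2 \le 0.$$

Therefore $4(\mathbf{a} \cdot \mathbf{b})^2 \le 4|\mathbf{a}|^2 |\mathbf{b}|^2$. After cancelling the factor of 4, notice that we can take the square root on both sides, since all quantities involved are positive. Hence we obtain the result

$$|\mathbf{a} \cdot \mathbf{b}| \le |\mathbf{a}|\,|\mathbf{b}|.$$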
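
The expansion of $f$ and the discriminant condition can also be checked numerically. The sketch below is an illustration under my own choices (plain Python lists as vectors, the hypothetical helpers `quadratic_coefficients` and `f_direct`, and the sample vectors $(1, 2, 3)$ and $(4, -1, 2)$). It compares $|\mathbf{a} + t\mathbf{b}|^2$ with $|\mathbf{b}|^2 t^2 + 2(\mathbf{a}\cdot\mathbf{b})t + |\mathbf{a}|^2$ at a few values of $t$, then computes the discriminant.

```python
def dot(a, b):
    """Dot product via the coordinate formula a_1*b_1 + ... + a_n*b_n."""
    return sum(x * y for x, y in zip(a, b))

def quadratic_coefficients(a, b):
    """Coefficients (p, q, r) with f(t) = p*t^2 + q*t + r, where f(t) = |a + t*b|^2."""
    return dot(b, b), 2 * dot(a, b), dot(a, a)

def f_direct(a, b, t):
    """f(t) computed straight from the definition |a + t*b|^2."""
    v = [x + t * y for x, y in zip(a, b)]
    return dot(v, v)

a, b = [1, 2, 3], [4, -1, 2]
p, q, r = quadratic_coefficients(a, b)

# The expanded quadratic agrees with the definition at sample points.
for t in (-2.0, -0.5, 0.0, 1.0, 3.0):
    assert abs(f_direct(a, b, t) - (p * t**2 + q * t + r)) < 1e-9

# f(t) >= 0 for every t, so the discriminant q^2 - 4pr cannot be strictly positive.
disc = q**2 - 4 * p * r
print(p, q, r, disc)  # 21 16 14 -920
```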

Exercises / Discussion for students

- Fill in the details in the proof above, making sure to use the properties of the dot product carefully (e.g. see Section 2 in my notes).

- Determine the conditions for equality to hold in the Cauchy-Schwarz inequality. Here’s one way to do it: notice that the argument above shows that (when $\mathbf{b} \neq \mathbf{0}$) equality holds if and only if the discriminant of $f$ is zero, that is, if and only if $f$ has a repeated real root. It is also useful to approach this geometrically: since the dot product is supposed to measure correlation between vectors, the condition $|\mathbf{a} \cdot \mathbf{b}| = |\mathbf{a}|\,|\mathbf{b}|$ would mean that the vectors are “maximally” correlated. Give a geometric description of this situation.

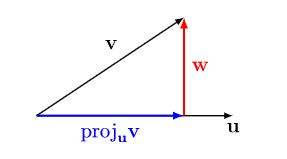

- Given two non-zero vectors

, the projection of

onto

is given by the formula

(see the picture below). Calculate the length of the orthogonal complement

and hence produce a short geometric proof of the Cauchy-Schwarz inequality.
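
For readers who like to experiment before writing proofs, here is a small Python check related to the last two exercises. It is only a hint, not a solution: using exact rational arithmetic from the standard `fractions` module, it confirms on one example that $\mathbf{a} - \operatorname{proj}_{\mathbf{b}}\mathbf{a}$ is perpendicular to $\mathbf{b}$, and that a pair of parallel vectors attains equality. The helpers `project` and `orthogonal_component` and the sample vectors are my own illustrative choices.

```python
from fractions import Fraction

def dot(a, b):
    """Dot product via the coordinate formula."""
    return sum(x * y for x, y in zip(a, b))

def project(a, b):
    """Projection of a onto a non-zero vector b: (a . b / |b|^2) b, computed exactly."""
    scale = Fraction(dot(a, b), dot(b, b))
    return [scale * y for y in b]

def orthogonal_component(a, b):
    """What is left of a after removing its projection onto b."""
    return [x - p for x, p in zip(a, project(a, b))]

a, b = [1, 2, 3], [4, -1, 2]
w = orthogonal_component(a, b)
print(dot(w, b))  # 0: the leftover part is perpendicular to b

# Equality case: with c a multiple of a, (a . c)^2 equals |a|^2 |c|^2 exactly.
c = [2, 4, 6]
print(dot(a, c) ** 2 == dot(a, a) * dot(c, c))  # True
```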

In the next section, we will give yet another proof of the Cauchy-Schwarz inequality that highlights some different techniques.

Cauchy-Schwarz via optimisation

The epsilon trick

You may recall that in the previous post we tried to derive the Cauchy-Schwarz inequality starting from the elementary AGM inequality

$$ab \le \frac{a^2 + b^2}{2}$$

for all real numbers $a, b$. This did not directly yield the result, but there is a sneaky trick that we can employ. Observe that the left hand side $ab$ is unchanged if we rescale $a \mapsto \varepsilon a$ and $b \mapsto \varepsilon^{-1} b$, where the Greek letter epsilon $\varepsilon$ denotes an arbitrary, strictly positive number. In this way, we get some extra information “for free” by applying the AGM inequality to these rescaled quantities:

$$ab = (\varepsilon a)(\varepsilon^{-1} b) \le \frac{1}{2}\left(\varepsilon^2 a^2 + \frac{b^2}{\varepsilon^2}\right).$$

This epsilon trick is a humble but very useful tool in mathematical analysis! Notice that it has created a certain asymmetry in the inequality. On the left hand side, we have a fixed value $ab$, but the right hand side now has a free parameter $\varepsilon$. We may as well write $\lambda = \varepsilon^2$ to simplify things:

$$ab \le \frac{1}{2}\left(\lambda a^2 + \frac{b^2}{\lambda}\right), \qquad \lambda > 0.$$
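
Here is a quick numerical illustration of the epsilon trick (a sketch of my own; `agm_bound` is a hypothetical helper and the numbers $a = 3$, $b = 12$ are arbitrary). For every $\lambda > 0$ the quantity $\frac{1}{2}(\lambda a^2 + b^2/\lambda)$ is an upper bound for the fixed value $ab = 36$, but how good the bound is depends strongly on $\lambda$.

```python
def agm_bound(a, b, lam):
    """Right-hand side of ab <= (lam*a^2 + b^2/lam)/2, valid for any lam > 0."""
    return 0.5 * (lam * a * a + b * b / lam)

a, b = 3.0, 12.0
for lam in (0.25, 1.0, 4.0, 16.0):
    bound = agm_bound(a, b, lam)
    assert a * b <= bound  # the left-hand side ab = 36 never exceeds the bound
    print(lam, bound)
# 0.25 289.125
# 1.0 76.5
# 4.0 36.0
# 16.0 76.5
```

In this example the bound is tight at $\lambda = 4 = |b|/|a|$, and it gets worse as $\lambda$ moves away from that value in either direction.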

Making use of the free parameter, we can try to optimise the right hand side, i.e. we seek a value of $\lambda$ that makes the right hand side as small as possible. This leads to a simple calculus exercise.

Exercise for students

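Let $A, B > 0$ be given. Prove that the function

$$g(\lambda) = \frac{1}{2}\left(\lambda A + \frac{B}{\lambda}\right),$$

defined for all $\lambda > 0$, achieves its minimum value $\sqrt{AB}$ at $\lambda = \sqrt{B/A}$.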
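
Before doing the calculus, you can check the claim numerically. The following Python sketch (an illustration under my own assumptions, not a proof) evaluates $g$ on a fine geometric grid of $\lambda$ values for the sample choice $A = 2$, $B = 50$, where the exercise predicts the minimiser $\sqrt{B/A} = 5$ and the minimum value $\sqrt{AB} = 10$. The function `grid_minimum` and its default grid bounds are hypothetical choices.

```python
import math

def g(lam, A, B):
    """g(lambda) = (lambda*A + B/lambda)/2 from the exercise, for lambda > 0."""
    return 0.5 * (lam * A + B / lam)

def grid_minimum(A, B, lo=1e-3, hi=1e3, steps=200_000):
    """Crude numerical minimiser: evaluate g on a geometric grid over [lo, hi]."""
    ratio = (hi / lo) ** (1 / steps)
    lam = lo
    best_lam, best_val = lam, g(lam, A, B)
    for _ in range(steps):
        lam *= ratio
        val = g(lam, A, B)
        if val < best_val:
            best_lam, best_val = lam, val
    return best_lam, best_val

A, B = 2.0, 50.0
lam_star, g_star = grid_minimum(A, B)
print(lam_star, math.sqrt(B / A))  # both close to 5
print(g_star, math.sqrt(A * B))    # both close to 10
```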

Of course, the result of the exercise should not be surprising at all, given what we know about the AGM inequality. However, when used in the context of vectors, the result is more interesting. We show how the epsilon trick and the optimisation argument just presented can be used to give an alternative proof of the Cauchy-Schwarz inequality.

Proof.

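Let $\mathbf{a} = (a_1, \dots, a_n)$ and $\mathbf{b} = (b_1, \dots, b_n)$ be any two vectors. If either of them is the zero vector, both sides of the inequality vanish, so we may assume that $\mathbf{a}, \mathbf{b} \neq \mathbf{0}$. Then

$$\mathbf{a} \cdot \mathbf{b} = \sum_{i=1}^n a_i b_i \le \sum_{i=1}^n \frac{a_i^2 + b_i^2}{2} = \frac{1}{2}\left(|\mathbf{a}|^2 + |\mathbf{b}|^2\right),$$

using the AGM inequality and the length formula for vectors. For any $\varepsilon > 0$, we may rescale $\mathbf{a} \mapsto \varepsilon\mathbf{a}$ and $\mathbf{b} \mapsto \varepsilon^{-1}\mathbf{b}$ without changing the dot product. Consequently

$$\mathbf{a} \cdot \mathbf{b} = (\varepsilon\mathbf{a}) \cdot (\varepsilon^{-1}\mathbf{b}) \le \frac{1}{2}\left(\lambda|\mathbf{a}|^2 + \frac{|\mathbf{b}|^2}{\lambda}\right),$$

where we set $\lambda = \varepsilon^2$ as before. On the right hand side of the inequality, we have exactly the function $g$ from the exercise above, with the strictly positive real numbers $A = |\mathbf{a}|^2$ and $B = |\mathbf{b}|^2$. Since the inequality holds for every $\lambda > 0$, it holds in particular at the minimiser $\lambda = \sqrt{B/A} = |\mathbf{b}|/|\mathbf{a}|$, where, by that exercise, the function takes its minimum value $\sqrt{AB} = |\mathbf{a}|\,|\mathbf{b}|$. So we deduce

$$\mathbf{a} \cdot \mathbf{b} \le |\mathbf{a}|\,|\mathbf{b}|.$$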

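To complete the proof, notice that we can replace $\mathbf{a}$ by $-\mathbf{a}$ without affecting the right hand side. Geometrically, this corresponds to a rotation of 180 degrees around the origin, and of course this operation does not change the length of the vector. Hence we also have $-(\mathbf{a} \cdot \mathbf{b}) = (-\mathbf{a}) \cdot \mathbf{b} \le |\mathbf{a}|\,|\mathbf{b}|$. We combine the two inequalities to obtain

$$|\mathbf{a} \cdot \mathbf{b}| \le |\mathbf{a}|\,|\mathbf{b}|$$

(here we use the fact that $|x| \le y$ is equivalent to $x \le y$ and $-x \le y$, where $x, y$ are any real numbers). This concludes the proof.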
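
The whole argument can be traced numerically. In this Python sketch (my own illustration; `rescaled_bound` is a hypothetical helper and the vectors are arbitrary samples), every $\lambda > 0$ gives an upper bound on $\mathbf{a}\cdot\mathbf{b}$, the choice $\lambda = |\mathbf{b}|/|\mathbf{a}|$ turns that bound into $|\mathbf{a}|\,|\mathbf{b}|$, and replacing $\mathbf{a}$ by $-\mathbf{a}$ handles the other sign.

```python
import math

def dot(a, b):
    """Dot product via the coordinate formula."""
    return sum(x * y for x, y in zip(a, b))

def rescaled_bound(a, b, lam):
    """(lam*|a|^2 + |b|^2/lam)/2, an upper bound on a . b for every lam > 0."""
    return 0.5 * (lam * dot(a, a) + dot(b, b) / lam)

a, b = [1.0, 2.0, 3.0], [4.0, -1.0, 2.0]
na, nb = math.sqrt(dot(a, a)), math.sqrt(dot(b, b))

# Every lam > 0 gives a valid (but usually loose) upper bound on a . b = 8 ...
for lam in (0.1, 1.0, 10.0):
    assert dot(a, b) <= rescaled_bound(a, b, lam)

# ... and the optimal lam = sqrt(B/A) = |b|/|a| turns the bound into |a||b|.
lam_star = nb / na
print(math.isclose(rescaled_bound(a, b, lam_star), na * nb))  # True

# Replacing a by -a leaves the bound unchanged and controls -(a . b).
minus_a = [-x for x in a]
assert dot(minus_a, b) == -dot(a, b)
assert dot(minus_a, b) <= rescaled_bound(minus_a, b, lam_star)
print(abs(dot(a, b)) <= na * nb)  # True
```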

A note on correlation

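To wrap up this blogpost, I would like to revisit the remarks made at the beginning, where I introduced the dot product as a way of measuring the correlation between two vectors. There, I used the term “correlation” merely as an analogy, but in fact this can be made precise in the context of probability theory and statistics. More specifically, let $X$ be a random variable; informally speaking, this is a function which assigns a real number to each outcome of a random experiment. Let $\mathbb{E}[X]$ denote the expectation (or expected value, or mean) of $X$. The variance of $X$ is then defined as

$$\operatorname{Var}(X) = \mathbb{E}\left[(X - \mathbb{E}[X])^2\right].$$

In other words, it is the average squared difference between $X$ and its mean value. Let us introduce two more fundamental concepts in probability and statistics. The standard deviation of $X$ is defined to be the square root of the variance, and is denoted by $\sigma_X = \sqrt{\operatorname{Var}(X)}$. Given two random variables $X$ and $Y$, the covariance of $X$ and $Y$ is defined as

$$\operatorname{Cov}(X, Y) = \mathbb{E}\left[(X - \mathbb{E}[X])(Y - \mathbb{E}[Y])\right].$$

Then we have the following inequality, which is essentially a probabilistic version of Cauchy-Schwarz:

$$|\operatorname{Cov}(X, Y)| \le \sigma_X \sigma_Y.$$

The correlation between two random variables (with $\sigma_X, \sigma_Y > 0$) is then defined by

$$\rho(X, Y) = \frac{\operatorname{Cov}(X, Y)}{\sigma_X \sigma_Y}.$$

By the inequality above, $-1 \le \rho(X, Y) \le 1$, just as $\cos\theta$ lies between $-1$ and $1$ in the formula for the dot product.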
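
To see these definitions in action, here is a Python sketch that treats a small, made-up paired dataset as a random experiment in which each pair is equally likely, so that expectations become plain averages (this convention, dividing by $n$ rather than $n - 1$, is my assumption). It checks the probabilistic Cauchy-Schwarz inequality and shows that $\rho$ equals the cosine of the angle between the centred data vectors, which is one precise sense in which the dot product measures correlation. The helper names are hypothetical.

```python
import math

def mean(xs):
    """Expectation when each of the n samples is equally likely."""
    return sum(xs) / len(xs)

def covariance(xs, ys):
    """Cov(X, Y) = E[(X - E[X])(Y - E[Y])] over the paired samples."""
    mx, my = mean(xs), mean(ys)
    return mean([(x - mx) * (y - my) for x, y in zip(xs, ys)])

def std(xs):
    """sigma_X = sqrt(Var(X)), using Var(X) = Cov(X, X)."""
    return math.sqrt(covariance(xs, xs))

def correlation(xs, ys):
    """rho(X, Y) = Cov(X, Y) / (sigma_X * sigma_Y); both sigmas must be non-zero."""
    return covariance(xs, ys) / (std(xs) * std(ys))

# Hypothetical paired data, made up purely for illustration.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.0, 1.0, 4.0, 3.0, 6.0]

# Probabilistic Cauchy-Schwarz: |Cov(X, Y)| <= sigma_X * sigma_Y.
print(abs(covariance(xs, ys)) <= std(xs) * std(ys))  # True

# rho is the cosine of the angle between the centred data vectors.
cx = [x - mean(xs) for x in xs]
cy = [y - mean(ys) for y in ys]
dot_c = sum(u * v for u, v in zip(cx, cy))
cos_theta = dot_c / math.sqrt(sum(u * u for u in cx) * sum(v * v for v in cy))
print(math.isclose(cos_theta, correlation(xs, ys)))  # True
```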

Closing comments

In this blogpost and the previous one, we have looked at three different proofs of the Cauchy-Schwarz inequality: the first proof used only basic algebra and an induction argument, the second proof used vectors and a slick algebraic argument, and the third proof employed a sneaky but simple optimisation argument. Moreover, the reader was invited to derive a fourth, geometric proof in the exercises. These proofs only give a tiny snapshot of the study of inequalities. The Cauchy-Schwarz inequality is one of the most fundamental results in mathematical analysis, and admits vast generalisations. For a taste of what is possible, I recommend the book The Cauchy-Schwarz Master Class by J. M. Steele.